All that theory I already knew.

Sorry, but from your replies this is not always absolutely clear to me – and there might also be others coming across this thread who find the full explanations useful. I don’t mean to bore anybody, but I still prefer to provide complete information.

So for this post, I apologize in advance for writing a lot of things that you probably already know.

The "issue" I have with the results I found (or with not having 1 LSB level zero-offset self-calibration) is that the scope obviously knows how to adjust the offset at 1 LSB resolution (as proven by the frozen-sweep position adjustment and the GND-coupled input), but the normal input samples do not behave the same way.

These are totally different scenarios. Whenever the acquisition is stopped (like after a Single Trigger event), the DC offset can no longer control the trace position. Moving the trace on the screen is then a purely graphical task.

The same applies to GND coupling. Unlike on old analog scopes, it does not physically short the scope input. It is just software: the (zero) trace is drawn at the position where it is supposed to be according to the offset setting, hence the internal DC offset voltage cannot have any impact. Some folks have wondered why the offset differs between GND coupling and actually shorting the scope input at high input sensitivities, and this is the answer.

My addition to the theory section:

As long as there is headroom in the ADC (e.g. showing only a 200-code range of an 8-bit ADC that has 256 codes in total), the "fine-tuning" of the offset (to within the ADC's resolution, or even better than that if the samples have enough bits) can be done digitally in software. (It can be done even with no ADC headroom, but then either the top or the bottom end would show a bit of clipping.)
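To put numbers on the headroom argument, here is a minimal Python sketch. It assumes an 8-bit ADC (codes 0..255) with a centred 200-code window mapped onto the screen, as in the example above; the constants and function names are illustrative only and say nothing about how any real scope is implemented. It checks how far the visible window can be shifted digitally before it runs past the real ADC range.

```python
# Illustrative constants (assumed, matching the 8-bit / 200-code example)
ADC_MIN, ADC_MAX = 0, 255   # 8-bit ADC: 256 codes in total
VISIBLE_SPAN = 200          # number of ADC codes mapped onto the screen height


def visible_window(shift_lsb: int) -> tuple[int, int]:
    """Return the (lowest, highest) ADC code shown on screen after a digital
    fine-offset shift of shift_lsb codes, starting from a centred window."""
    total_codes = ADC_MAX - ADC_MIN + 1
    lo = ADC_MIN + (total_codes - VISIBLE_SPAN) // 2 + shift_lsb
    return lo, lo + VISIBLE_SPAN - 1


def window_clips(shift_lsb: int) -> bool:
    """True if the shifted window needs codes the ADC cannot produce, i.e.
    the top or bottom edge of the screen would show clipped data."""
    lo, hi = visible_window(shift_lsb)
    return lo < ADC_MIN or hi > ADC_MAX


def max_fine_shift() -> int:
    """Largest shift (in LSB) in either direction that stays clip-free."""
    return (ADC_MAX - ADC_MIN + 1 - VISIBLE_SPAN) // 2
```

With these numbers `visible_window(0)` is `(28, 227)`, so up to ±28 LSB of fine offset could be applied in software before the screen edges would show clipped data.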

As an example, say the settings are such that the ADC resolution corresponds to exactly 1mV, but the offset system provides only 10mV resolution, and we would like a 6mV offset. If the system then chooses the closest analog offset (10mV), the ADC gives values that are 4mV off the mark (too high an offset). But the system knows that, so it can simply subtract 4mV from every sample digitally, and the final samples are then offset by the desired 6mV.
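The arithmetic of that example can be sketched in a few lines of Python. This is only an illustration of the coarse-analog plus fine-digital split described above, assuming nearest-step selection for the analog offset (ties rounded up) and samples expressed in mV with 1 mV per LSB; the function names are hypothetical.

```python
def split_offset(requested_mv: int, dac_step_mv: int) -> tuple[int, int]:
    """Split a requested offset into the nearest coarse analog (DAC) offset and
    the residual that has to be applied digitally. Integer math, ties round up."""
    analog_mv = ((requested_mv + dac_step_mv // 2) // dac_step_mv) * dac_step_mv
    return analog_mv, requested_mv - analog_mv


def apply_fine_offset(samples_mv: list[int], residual_mv: int) -> list[int]:
    """Add the digital residual to every sample (one signed addition each)."""
    return [s + residual_mv for s in samples_mv]


# The numbers from the example: 1 mV per LSB, 10 mV DAC steps, 6 mV wanted.
analog, residual = split_offset(6, 10)
print(analog, residual)                           # 10 -4
# A 0 V input read through the 10 mV analog offset comes out as 10 mV;
# after the correction it sits at the desired 6 mV.
print(apply_fine_offset([10, 15, 20], residual))  # [6, 11, 16]
```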

You are right, it could be done that way – on a very different scope, that is, one that would be as slow as a turtle.

Fact is, however, that this is not the case. The full ADC range is used for signal acquisition only, nothing of that is sacrificed for calibration and/or fine-adjust purposes.

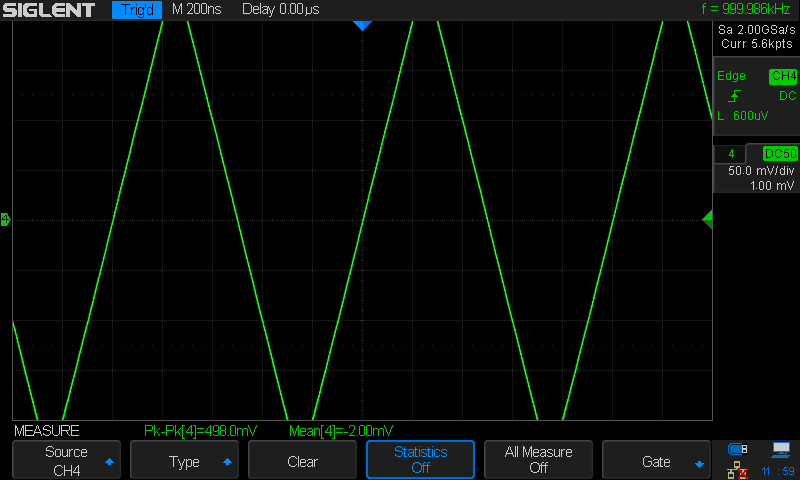

Here’s the proof: a 500mVpp signal is too high in amplitude to fit on the screen at a 50mV/div vertical gain setting.

[Screenshot: SDS2304X_Daynamic_500mVpp_50mV]

Note that the peak-to-peak measurement is still correct (within tolerances), even though the screen height is only 400mV (8 divisions × 50mV/div).

If we stop the acquisition and change the vertical scale to 100mV/div, we can see the full waveform, nothing misaligned, nothing clipped:

[Screenshot: SDS2304X_Daynamic_500mVpp_100mV]

So this scope does know how to do the math, as it can apply the same software offset adjustment to the frozen (single-triggered) sweep samples, but it seems it is not doing so with the incoming sample data flow. And because it has not corrected the incoming data, the shown values, both in the running waveforms (except with GND-coupled input) and in the single-triggered frozen sweep, can also be slightly wrong. In the single-trigger view it only calculates the "fine-tuned" offset change on top of the uncorrected sample data; it never applies the correction, not even during this post-processing, where it would have all the time it needs and then some.

The scope does not do any adjustments in Run mode. There is no time for that either. It just collects ADC samples in the sample memory and then flushes the whole bunch out to the display every 40 milliseconds. The SDS2000(X) does manipulate the sample data in Average and Eres acquisition modes, though; this limits the usable sample memory to a total of 28k and, on top of that, slows the waveform update rate down to something close to the screen update rate.

Note: this is different for the newer SDS1000X-E models, which do it in hardware and can use deep memory and reach full speed in these modes.

There is also no point in modifying the data in Stop mode all of a sudden. The scope just shows the very last acquisition in this mode, just as it has been displayed during Run (there it was not alone but together with numerous previous acquisitions).

Only when you alter the vertical position in Stop mode does it do some math to reposition the trace to where it’s supposed to be with the new offset – and usually the assumptions are correct, so you don’t see any major jump when starting Run mode again.
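The kind of purely graphical repositioning described here can be sketched as follows. This is a hypothetical Python illustration, not Siglent's code: it assumes the frozen record is held as values in mV (a real scope stores raw ADC codes, and that conversion is left out) and a screen of 8 divisions centred on zero. It replays the 500mVpp example: at 50mV/div the peaks land outside the screen, at 100mV/div they fit, and changing the offset in Stop mode only moves the drawn points.

```python
SCREEN_HALF_DIVS = 4  # 8 vertical divisions, centre line at 0 (assumed layout)


def to_divisions(samples_mv: list[int], offset_mv: int, mv_per_div: int) -> list[float]:
    """Map stored samples (already in mV) to vertical screen positions in
    divisions for a given offset and scale. No new acquisition is involved."""
    return [(s + offset_mv) / mv_per_div for s in samples_mv]


def off_screen(positions: list[float]) -> bool:
    """True if any point would be drawn above or below the visible screen."""
    return any(abs(p) > SCREEN_HALF_DIVS for p in positions)


# Frozen record of a 500 mVpp signal (peaks and zero crossing only, for brevity)
record = [-250, 0, 250]
print(to_divisions(record, 0, 50))    # [-5.0, 0.0, 5.0]  -> exceeds the screen
print(to_divisions(record, 0, 100))   # [-2.5, 0.0, 2.5]  -> fits
print(to_divisions(record, 50, 100))  # [-2.0, 0.5, 3.0]  -> offset changed in Stop mode
```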

In any case, the shown samples do not have the fine-step offset shown to the user (except for roughly 1 in 6 offset steps, EDIT: and there is no way to know which one of those 6 steps gives a physical offset that matches the shown offset) (ignoring absolute accuracy and only considering relative accuracy and resolution). Either the scope should apply the corrective math (one signed 8-bit addition per sample) or it should let the user adjust the offset only with the resolution actually available (since it apparently cannot be controlled as accurately as we are led to believe anyway). (I am also aware that the best offset step size in those lowest ranges would likely be about double what the steps are now at 2mV/div sensitivity; I'd be fine with that.) Both solutions would end with samples that have the correct offset; one needs slightly more computation and gives smoother offset control, the other gives visibly coarser offset control but matches what the analog side can actually do.
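The "1 in 6" pattern follows from the step sizes mentioned in this thread. The Python sketch below assumes one UI offset click equals 0.5 LSB (the GND-coupled trace moves 1 LSB every 2 steps) and that the analog offset DAC moves in exactly 3 LSB steps (the "~3LSB jumps"), with nearest-step rounding; these are simplifying assumptions, not measured facts about the hardware. Everything is kept in half-LSB integer units to avoid floating-point noise.

```python
UI_STEP = 1    # one UI offset click = 0.5 LSB (all values in half-LSB units)
DAC_STEP = 6   # analog offset DAC step = 3 LSB (assumed exact)


def analog_offset(requested: int) -> int:
    """Nearest offset the DAC can really produce (ties round up)."""
    return ((requested + DAC_STEP // 2) // DAC_STEP) * DAC_STEP


def offset_error(requested: int) -> int:
    """Physical minus displayed offset, in half-LSB units."""
    return analog_offset(requested) - requested


clicks = range(0, 60 * UI_STEP, UI_STEP)
exact = [c for c in clicks if offset_error(c) == 0]
print(len(exact), "of", len(clicks))                         # 10 of 60
print(max(abs(offset_error(c)) for c in clicks) / 2, "LSB")  # 1.5 LSB

# Option 1 (digital correction): adding -offset_error(c) / 2 LSB to each sample
# makes the shown offset exact for every click.
# Option 2 (coarse control): only offer the clicks the DAC can hit exactly.
coarse_clicks = [c for c in clicks if c % DAC_STEP == 0]
```

Under these assumptions, the clicks that happen to match are exactly the ones option 2 would keep, and the worst-case mismatch for the other clicks is 1.5 LSB.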

Yes, now we’re talking about a change request.

I agree that it would be better to display the actual values, but I think I can understand why it is the way it is now. The UI is kind of universal and defines a certain resolution for the offset control. Some low level driver has to translate that request to the actual hardware, which is just not capable of fulfilling it at the very high sensitivities in this particular case. The UI doesn’t know that and it would not be just a small change to implement that. A new API would be required for the UI to query the driver about the capabilities of the hardware and then the proper restrictions have to be applied. But at least it would not be out of the question to do that.
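A rough idea of what such a capability query could look like, written as a Python sketch. The driver class, its method, and the step values in the table are all hypothetical placeholders invented for illustration; nothing here reflects Siglent's actual firmware or API. The UI asks the driver for the real offset step at the current scale and snaps the user's request to it.

```python
class HypotheticalOffsetDriver:
    """Stand-in for the low-level AFE driver. It reports, per vertical scale,
    the smallest offset step the hardware can really produce (values made up)."""

    def __init__(self, step_uv_by_scale: dict[int, int]):
        self._step_uv_by_scale = step_uv_by_scale  # mV/div -> step in µV

    def offset_step_uv(self, mv_per_div: int) -> int:
        return self._step_uv_by_scale[mv_per_div]


def snap_ui_offset(requested_uv: int, mv_per_div: int,
                   driver: HypotheticalOffsetDriver) -> int:
    """UI side: restrict the offset to the nearest value the hardware can produce."""
    step = driver.offset_step_uv(mv_per_div)
    return ((requested_uv + step // 2) // step) * step


driver = HypotheticalOffsetDriver({2: 240, 50: 1000})  # placeholder numbers
print(snap_ui_offset(1000, 2, driver))   # 960
print(snap_ui_offset(1000, 50, driver))  # 1000
```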

The other approach, though, applying some software processing during Run to make up for insufficient DAC resolution, is clearly not going to happen – I can smell that in advance.

(Note that the corrective addition does not need to be done for every single input sample, only for the shown samples, but then everything else needs to consider this dualism of a different "effective input sample offset" and a "higher-resolution visual offset". E.g. trigger levels would need to work with the same effective offset the input data is handled with, but the levels must be shown in the same "visual offset" as the samples. So the added complexity of such a solution would just be asking for more bugs... Adding an offset correction to every single input sample is simple, but it does need that one calculation, and there can be quite a number of samples coming in; are there enough processing resources in the system?)
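The trigger-level dualism can be shown with a small Python sketch. It assumes a hypothetical design in which the correction is added only on the display path while the trigger keeps comparing uncorrected ADC codes; all names are made up for illustration. The trigger level the user sees has to be shifted back by the same residual, otherwise the trigger fires on samples that are drawn below the trigger line.

```python
def shown(raw_codes: list[int], residual: int) -> list[int]:
    """Correction applied only on the display path (hypothetical design)."""
    return [c + residual for c in raw_codes]


def raw_trigger_threshold(visual_level: int, residual: int) -> int:
    """The trigger works on uncorrected codes, so a level the user sets in
    'visual' units must be shifted back by the same residual."""
    return visual_level - residual


residual = -4                       # digital fine-offset correction, in LSB
raw = [100, 104, 110]
level = 100                         # trigger level as shown on screen
fires_raw = [c >= raw_trigger_threshold(level, residual) for c in raw]
fires_visual = [v >= level for v in shown(raw, residual)]
print(fires_raw == fires_visual)    # True
# Forgetting the conversion (comparing raw codes against 100) would also fire
# on the first sample, which is displayed at 96, i.e. below the trigger line.
```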

There are no samples that are not shown on the screen. This is one of the key points of the Siglent X-series scopes: they don’t hide any real data, and users can always see everything that has been captured at a glance. A single video frame on the screen, updated every 40 milliseconds, contains up to thousands of trigger events, and the entire sample memory is crammed into that display. Yes, many samples will overlap that way, but you’ll never miss a peak or glitch as long as the effective sample rate is high enough to capture it in the first place, and this is also why intensity grading works so well with these scopes.

You have just identified a number of impacts that a software correction of the input offset would have on other areas, like triggering and measurements, so it is not just an easy coffee-break change. But the biggest argument against such an implementation would be the impact on performance, as already stated. Post-processing every single sample in a scope that can use up to a total of 280Mpts (with both ADCs active) is just not going to happen.
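A back-of-the-envelope Python sketch of the scale involved. The 2 GSa/s per ADC figure and the 1e9 additions per second processing rate are assumptions chosen only for illustration; only the 280Mpts total and the 40 ms display frame come from this thread. Even at a billion additions per second, correcting one full record would take several display frames.

```python
def corrections_per_second(sample_rate_sps: int, active_adcs: int) -> int:
    """One signed addition per incoming sample, per ADC."""
    return sample_rate_sps * active_adcs


def record_correction_ms(record_points: int, adds_per_second: int) -> float:
    """Time to post-process one full record at a given addition throughput."""
    return record_points * 1000 / adds_per_second


# Assumed 2 GSa/s per ADC with both ADCs active (illustrative figures)
print(corrections_per_second(2_000_000_000, 2))          # 4000000000
# 280 Mpts record, hypothetical processor doing 1e9 additions per second,
# compared with a 40 ms display frame
print(record_correction_ms(280_000_000, 1_000_000_000))  # 280.0
```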

An additional question is related to the GND-coupled input. How can its offset be controlled at 1 LSB resolution? I'd have assumed that it would simply be a switched connection to ground at the AFE input (or similar), and thus go through exactly the same normal analog processing. It should then show the internal noise and offset calibration errors, as well as those ~3 LSB jumps with offset adjustment. But since the GND-coupled trace moves by 1 LSB every 2 steps, something is very different there. Is GND switched into the path after the analog offset injection, with all of the offset applied as a digital "correction"? Or even later, just before the ADC (as it does not need any gain either)? Or is it simply a digital simulation (with a tiny bit of simulated noise on it)?

I’ve already mentioned that at the beginning. Since the data from the acquisition are ignored in this coupling type, the scope has nothing to correct. It just draws a line at the position that is set by the vertical position control. It’s a pure graphical operation.

I for one don’t see any noise in GND mode, not even at the zoomed 1mV/div gain setting. But I admit that I do not know where exactly the switch is implemented. From some experiments, my first suspicion would be that simply the data transfer between ADC and sample buffer is stopped.