EDIT: Inline pictures didn't seem to work, so I guess they are at the bottom of the post.

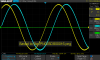

I think I found some problems/strangeness with phase and amplitudes when comparing two signals, depending on which channels are being used.

I just got an SDG2042X AWG (now unlocked for 120 MHz sine), and was messing around measuring the phase difference of two dissimilar BNC cables on my SDS1104X-E scope (unlocked to 200 MHz).

Can someone advise me on whether I'm seeing a problem with just my scope, or is it with the model/firmware in general?

Basically, I am seeing incorrect phase and amplitude measurements when comparing two signals, depending on which channels are enabled and which timebase is set. There are big differences depending on whether I'm using two channels from the same ADC or one channel from each ADC. For example, using channels 1 and 3, the phase difference is wrong by about 40 degrees (at 120 MHz sine) from what it should be, or at least from what I assume it should be.

The issues occur when directly viewing the waveforms and are also reflected in Bode plots done on the scope.

Test setup:

AWG - Both channels set to 120 MHz sine wave, tracking on, HiZ.

Using two different BNC cables with slightly different lengths, without 50 Ohm terminators (still waiting for an eBay order to arrive).

(One cable came with the AWG, the other is a PICO MI030)

Here are two BodePlot II plots demonstrating the difference while just changing which scope input channels are connected and enabled.

Case 1:

Using channels 1 and 3, vertical scale @ 500 mV/div, averaging on

1 ns/div: 33.88 degrees, 780.00 ps skew

2 ns/div: 34.56 degrees, 780.00 ps skew

5 ns/div: 38.14 degrees, 883.33 ps skew, 3.32 Vpp

10 ns/div: 38.07 degrees, 880.95 ps skew, 3.32 Vpp

20 ns/div: 38.39 degrees, 846.15 ps skew, 3.32 Vpp

50 ns/div: 72.09 degrees, 1.68 ns skew, 3.32 Vpp

100 ns/div: 72.11 degrees, 1.68 ns skew, 3.32 Vpp

200 ns/div: 72.22 degrees, 1.68 ns skew, 3.32 Vpp

500 ns/div: 72.22 degrees, 1.67 ns skew, 3.32 Vpp

1 us/div: 72.22 degrees, 1.68 ns skew, 3.32 Vpp

<etc> <etc> <etc> 3.32 Vpp

200 us/div: <n/a>, <n/a>, 3.66 Vpp * Sample rate drops to 500 MSa/s at this timebase

To get a steadier phase result I turned on averaging over 1024 samples in the Acquire menu, but the same changes across timebases are observed without averaging.
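As a sanity check on the list above, the phase and skew readouts should be tied together by phase = 360 × frequency × skew. Here is a minimal Python sketch of that cross-check at 120 MHz. The function name and the choice of three sample rows are just for illustration and have nothing to do with the scope's own measurement code.

```python
def skew_to_phase_deg(skew_s: float, freq_hz: float) -> float:
    """Phase shift in degrees that corresponds to a time skew at one frequency."""
    return 360.0 * freq_hz * skew_s


FREQ_HZ = 120e6  # AWG sine frequency used in this test

# (timebase, measured phase in degrees, measured skew in seconds),
# copied from a few rows of the Case 1 list
readings = [
    ("1 ns/div", 33.88, 780.00e-12),
    ("20 ns/div", 38.39, 846.15e-12),
    ("50 ns/div", 72.09, 1.68e-9),
]

for timebase, phase_meas, skew in readings:
    phase_calc = skew_to_phase_deg(skew, FREQ_HZ)
    print(f"{timebase}: measured {phase_meas:.2f} deg, "
          f"computed from skew {phase_calc:.2f} deg")
```

For example, 780 ps at 120 MHz works out to about 33.7 degrees, and 1.68 ns to about 72.6 degrees, so the phase and skew readouts move together rather than one of them being off on its own.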

Case 2:

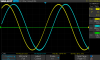

Using channels 1 and 2, vertical scale @ 500 mV/div, averaging on

1 ns/div: 32.81 degrees, 720.00 ps skew, 3.66 Vpp

2 ns/div: 32.43 degrees, 740.00 ps skew, 3.66 Vpp

5 ns/div: 32.38 degrees, 750.00 ps skew, 3.66 Vpp

10 ns/div: 31.97 degrees, 756.04 ps skew, 3.66 Vpp

20 ns/div: 33.09 degrees, 742.86 ps skew, 3.66 Vpp

50 ns/div: 32.38 degrees, 761.54 ps skew, 3.66 Vpp

100 ns/div: 32.67 degrees, 774.44 ps skew, 3.66 Vpp

200 ns/div: 32.87 degrees, 781.45 ps skew, 3.66 Vpp

500 ns/div: 32.98 degrees, 781.45 ps skew, 3.66 Vpp

1 us/div: 32.29 degrees, 781.45 ps skew, 3.66 Vpp

50 us/div: 33.18 degrees, 878.07 ps skew, 3.66 Vpp

100 us/div: 32.60 degrees, <unstable>, 3.66 Vpp

200 us/div: <n/a>, <n/a>, 3.66 Vpp

NOTE 1:

Without averaging, the skew measurements start flipping around all over the place at 100 ns/div and longer timebases. At 50 ns/div and shorter, the skew values remain in the expected range (given the jitter specs of the AWG, I think).

Even with averaging on, the skew values start having trouble at 100 us/div, ranging between 0.4 and 5+ ns with both positive and negative values. I'm not sure why, because the scope is still at 500 MSa/s at this point. Stopping the scope and zooming in, the signals and relative spacing look OK.

The Case 2 phase measurements are OK all the way through 100 us/div, with or without averaging. Further increases in timebase drop the sample rate to 250 MSa/s, 100 MSa/s, etc., so I think aliasing prevents correct measurements of this frequency anyway.
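To put rough numbers on the aliasing guess, here is a small Python sketch that folds a 120 MHz sine into the 0 to fs/2 band for the sample rates mentioned. It assumes ideal sampling and ignores whatever interpolation or measurement algorithm the scope applies. The function name is made up for this example.

```python
def apparent_frequency_hz(signal_hz: float, sample_rate_hz: float) -> float:
    """Frequency at which a sampled sine appears after folding into 0..fs/2."""
    folded = signal_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)


SIGNAL_HZ = 120e6  # AWG sine frequency used in this test

for rate_hz in (500e6, 250e6, 100e6):
    nyquist_hz = rate_hz / 2
    seen_hz = apparent_frequency_hz(SIGNAL_HZ, rate_hz)
    samples_per_cycle = rate_hz / SIGNAL_HZ
    status = "below Nyquist" if SIGNAL_HZ < nyquist_hz else "aliased"
    print(f"{rate_hz / 1e6:.0f} MSa/s: Nyquist {nyquist_hz / 1e6:.0f} MHz, "
          f"appears at {seen_hz / 1e6:.0f} MHz, "
          f"{samples_per_cycle:.2f} samples/cycle ({status})")
```

Under these assumptions, 250 MSa/s is still just above the Nyquist limit for 120 MHz (125 MHz), but with barely more than two samples per cycle. At 100 MSa/s the signal would fold down to 20 MHz, so measurements at that point cannot be trusted.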

NOTE 2:

For Case 1, when averaging is off the skew measurements do NOT vary wildly like in Case 2, all the way up to 100 us/div. The values are still wrong in Case 1, but they are stable.

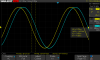

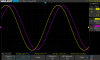

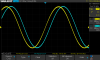

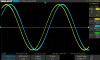

Case 3:

This case shows two separate problems.

1. Each time I disable and re-enable the third (unused) channel, the phase difference between the two waveforms changes randomly, both as displayed and as auto-measured. Assuming ~32 degrees is expected, I can get it to vary between 24 and 54 degrees without doing anything else, as demonstrated in the pics below.

2. The amplitude of both waveforms changes when I enable a third (unused) channel, from ~3.33 to ~3.66 V (10%). I think 3.66 V is the correct one, but I technically don't know for sure since I lack any other test equipment to validate against.
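Since Bode plots show gain in dB, it may help to express the amplitude jump in item 2 the same way. A minimal Python sketch, using the approximate 3.33 V and 3.66 V readings from above (the helper name is just for illustration):

```python
import math


def ratio_db(v_a: float, v_b: float) -> float:
    """Voltage ratio v_a / v_b expressed in decibels."""
    return 20.0 * math.log10(v_a / v_b)


V_TWO_CHANNELS = 3.33    # approx. Vpp with only the two signal channels enabled
V_THREE_CHANNELS = 3.66  # approx. Vpp after enabling a third (unused) channel

step_db = ratio_db(V_THREE_CHANNELS, V_TWO_CHANNELS)
step_pct = 100.0 * (V_THREE_CHANNELS / V_TWO_CHANNELS - 1.0)
print(f"Amplitude step: {step_pct:.1f} % = {step_db:.2f} dB")
```

That works out to roughly 0.8 dB, which is the size of error to look for in any absolute amplitude reading taken in one channel configuration and compared against another.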

I collected more info, but got bogged down with so many permutations of quirks I found, so I thought I'd make this initial post first and see where it leads.