It sounds like you expect a meter's resolution and its accuracy to be the same. No meter can do that, but it does not mean that even an uncalibrated 8.5d meter is useless.

Take a 3458A/02 or a fancy 8588A: same story, neither will give you even 7.5-digit accuracy. Yes, such a meter is useless for determining the value of an unknown DUT source to 8.5 digits, but it is still very useful for measuring the stability of an unknown DUT source, or the difference between DUTs.
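To illustrate why a difference measurement tolerates an uncalibrated meter, here is a minimal Python sketch. It assumes a hypothetical meter model with only a fixed gain error and offset (the 10 ppm and 1.5 uV values and both DUT voltages are made up for illustration) and deliberately leaves out INL and noise:

```python
# Hypothetical meter model: fixed gain error and offset only (assumed values).
# No INL or noise is modelled here.
GAIN_ERROR_PPM = 10.0   # assumed gain error, ppm of reading
OFFSET_UV = 1.5         # assumed offset, microvolts

def meter_reading(true_volts: float) -> float:
    """Return what the hypothetical meter would display for a true input."""
    return true_volts * (1 + GAIN_ERROR_PPM * 1e-6) + OFFSET_UV * 1e-6

def ppm(error_volts: float, nominal_volts: float) -> float:
    """Express an error in ppm of a nominal value."""
    return error_volts / nominal_volts * 1e6

dut_a = 10.000012   # assumed true output of DUT A, volts
dut_b = 10.000047   # assumed true output of DUT B, volts

# Error of one absolute reading: dominated by gain error plus offset.
single_err_ppm = ppm(meter_reading(dut_a) - dut_a, 10.0)

# Error of the difference: the offset cancels, and the gain error only
# scales the small 35 uV gap between the two DUTs.
measured_diff = meter_reading(dut_b) - meter_reading(dut_a)
true_diff = dut_b - dut_a
diff_err_ppm = ppm(measured_diff - true_diff, 10.0)

print(f"single-reading error: {single_err_ppm:.3f} ppm")
print(f"difference error:     {diff_err_ppm:.6f} ppm")
```

In this model a single reading is off by roughly 10 ppm, while the difference between the two DUTs is good to far below 1 ppm of 10 V.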

Numbers you see on calibration report are valid only at the time of the calibration. 0.08 ppm from +10V point only means that at time of measurement, in calibration lab the average deviation between source (+/-5.4 ppm) and meter (+/-12.9 ppm) was that value. It does not mean meter is 0.08 ppm accurate on it's 20V range. 19V point already reveals you exactly that, where difference becomes -0.36 ppm. There are multiple error sources inside the meter and overall calibration procedure. Another important contributor is INL errors. This is where metrology 8.5d meters really shine, allowing you to transfer known external standards to unknown DUT voltages with sub-ppm

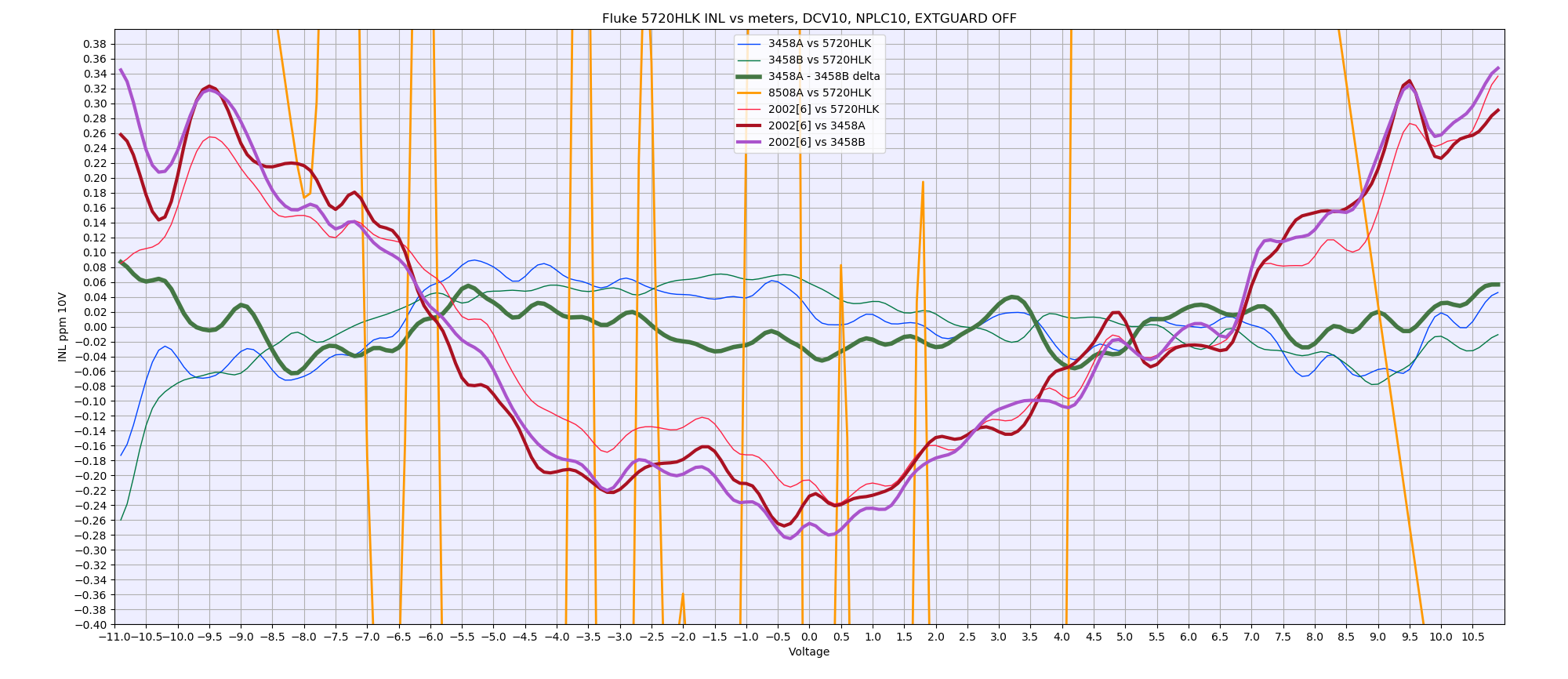

accuracy. Perhaps INL test I've performed with 2002 earlier this year could indicate better than thousand words:

Based on this chart, we can expect my particular K2002-GPIB6 to transfer VDC values on the 20 V range with better than +0.4 / -0.3 ppm error. Two 3458As in tandem can do the same transfers with better than +/-0.1 ppm error. You can also do such 0.1 ppm transfers with 1980s technology, such as a Fluke 720A + null meter + DC reference. However, 8.5d meters and elaborate KVD (Kelvin-Varley divider) setups are not the only way to do such transfers: some selected and characterized 6.5d meters can reach similar performance too, as fellow volt-nuts here on the forum have already demonstrated. But that does not mean these meters are specified for it, or legally calibrated to do so.

(This is also why I don't understand Fluke's marketing of the 8508A and the new 8588A/8558A, which do NOT specify linearity at all.)

If we verify that the 2002 is stable (by measuring a known stable source, such as a DC reference with known drift), we can then use that uncalibrated 2002 to compare two different DC references and obtain the difference between them with better uncertainty than if we just measured each DUT directly. This ability lets a volt-nut build the infamous LTZ1000 reference, measure its stability with an uncalibrated K2002 (caveat: assuming the meter is also stable), and ship that reference of unknown value out for calibration. Once the reference's output value is known, the total error of the meter on the 20 VDC range at that voltage is known too. If the reference gets a better uncertainty than the K2002 spec, you can then calibrate the K2002 on that voltage range.
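Once the lab has certified the reference, the meter's error at that voltage follows directly. A minimal sketch, assuming the error near that point is a pure gain error (a one-point correction that says nothing about other voltages or INL); the function names and voltages are hypothetical:

```python
def meter_error_ppm(meter_reading_v, certified_v):
    """Meter error at one point, from its reading of a certified reference."""
    return (meter_reading_v - certified_v) / certified_v * 1e6

def apply_correction(reading_v, error_ppm):
    """Correct a later reading, assuming the error is a pure gain error."""
    return reading_v / (1 + error_ppm * 1e-6)

def reference_is_better(ref_uncertainty_ppm, meter_spec_ppm):
    """True if the reference's certified uncertainty beats the meter spec."""
    return ref_uncertainty_ppm < meter_spec_ppm

# Hypothetical numbers: the K2002 read 10.000060 V on the LTZ1000 reference,
# and the lab later certified the reference at 10.000050 V.
err = meter_error_ppm(10.000060, 10.000050)
print(f"meter error at 10 V: {err:.3f} ppm")
print(f"corrected reading: {apply_correction(10.000060, err):.6f} V")
```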

Single-point (in time) calibration is not very useful; multiple periodic calibrations are required to figure out DUT stability (the real drift over the calibration interval). Given that calibration of top-end meters is expensive and only a few labs can do it to the instrument's specification, volt-nuts often choose not to adjust their meters, but instead to collect the error values (12.9 ppm in your example) and make sure each error stays below the unit's specification for the desired calibration period. The alternative is to do your own calibration or adjustments against a higher-end standard, such as your +/-2 ppm standard. However, another catch with K2001/2002 units is that they will not easily let you adjust just one range, so you end up needing a high-end calibrator even if you have a JVS (Josephson voltage standard) at home.

Serious calibration labs will not adjust your device, even if it is outside of spec. A simple example: you have a 732A DC standard that you monitored every day for 5 years, so you have 5 years of data history. You ship it to a lab; they measure it, find it outside of spec, and adjust it (turning the trimpot) to their standard, whose history you do NOT know. Now the lab has invalidated 5 years of your effort, and you need to collect new data for many more months before you can tell whether the drift rate remained the same as before the adjustment or changed (e.g. due to trimpot contact resistance variation, the impact of subtle current differences, etc.). Then you still need some math juggling to align the "old" and "new" data. That is a lot of trouble for no clear benefit.

To be fair, most volt-nuts care less about calibration and traceability, because maintaining them takes a lot of work and money, and it makes no sense for a hobby-level home lab. Unless you are crazy enough to own a 4708/4808/5700A/5720A and a bank of in-spec standards to calibrate the 5720A, which you in turn use to calibrate meters like the K2002. Then you can run tests 24 hours a day, every day, and it "saves" money on shipping meters out for calibration. I treat my 2002s as secondary check meters and check them once every 6 months or so. If their readings deviate from my primary meters by more than the 24-hour spec, I get worried.
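That 24-hour check can be written as a simple limit test. A minimal sketch, assuming the spec is expressed as ppm of reading plus ppm of range (the usual DMM convention); the helper names are hypothetical and the spec figures are placeholders, not actual K2002 numbers, so take the real ones from the datasheet:

```python
def spec_limit_v(reading_v, range_v, ppm_of_reading, ppm_of_range):
    """Allowed error in volts for a 'ppm of reading + ppm of range' spec."""
    return (ppm_of_reading * abs(reading_v) + ppm_of_range * range_v) * 1e-6

def check_secondary(secondary_v, primary_v, range_v,
                    ppm_of_reading, ppm_of_range):
    """Return (deviation in V, limit in V, True if the deviation exceeds it)."""
    deviation = abs(secondary_v - primary_v)
    limit = spec_limit_v(secondary_v, range_v, ppm_of_reading, ppm_of_range)
    return deviation, limit, deviation > limit

# Placeholder 24-hour spec for a 20 V range (NOT real K2002 figures).
PPM_READING, PPM_RANGE = 2.0, 0.5
dev, limit, worried = check_secondary(10.000018, 10.000002, 20.0,
                                      PPM_READING, PPM_RANGE)
print(f"deviation {dev * 1e6:.1f} uV, limit {limit * 1e6:.1f} uV, "
      f"worried: {worried}")
```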

And yes, calibration labs that just test your DUT against an uncalibrated source and print a sticker for the front panel do exist. Because I don't feel comfortable playing the shipping game with expensive meters, and the choice of capable local labs is limited, in-house calibration is my choice in this story.